31 Electromagnetic transitions

Some of the previous examples we’ve considered have been only partly quantum mechanical: we’ve used a quantum treatment of matter (e.g. atoms), but a classical treatment of electromagnetism as a background field. This is known as a semi-classical treatment. A fully consistent description requires quantizing the electromagnetic field itself, leading eventually to quantum electrodynamics. But there are many useful results we can obtain with the semi-classical approach, including a complete description of what experimental measurements observe in terms of spectral lines: light emitted in specific transitions between energy levels, whose wavelengths tell us the energy splittings and whose intensities tell us the transition rates.

31.1 Electromagnetism in CGS units

Since we’ll be relying heavily on electromagnetism, let’s write out some basics in the CGS (a.k.a. “Gaussian”) units commonly used for quantum mechanics, including by Sakurai. Maxwell’s equations in CGS are as follows: \begin{aligned} \nabla \cdot \vec{E} &= 4\pi \rho(\vec{x}) \\ \nabla \cdot \vec{B} &= 0 \\ \nabla \times \vec{E} + \frac{1}{c} \frac{\partial \vec{B}}{\partial t} &= 0 \\ \nabla \times \vec{B} - \frac{1}{c} \frac{\partial \vec{E}}{\partial t} &= \frac{4\pi}{c} \vec{J} \\ \end{aligned} and the Lorentz force law for the force on a charge q is \vec{F} = q\vec{E} + \frac{q}{c} \vec{v} \times \vec{B} picking up an extra factor of c relative to SI. It will be often be useful to write our fields in terms of the electromagnetic potentials \phi and \vec{A}, which are related to the fields as \begin{aligned} \vec{E} &= -\nabla \phi - \frac{1}{c} \frac{\partial \vec{A}}{\partial t}, \\ \vec{B} &= \nabla \times \vec{A}. \end{aligned} We know that the potentials are only defined up to gauge transformations, so we can pick a convenient gauge; we will adopt Coulomb gauge, \nabla \cdot \vec{A} = 0. For our current discussions, the most important solution to these equations is that for radiation in free space, so with \rho = \vec{J} = 0. In free space with \rho = 0 and with the Coulomb gauge condition, the scalar potential satisfies the equation \nabla^2 \phi = 0, which implies simply that \phi = 0. (The combination of \nabla \cdot \vec{A} = 0 and \phi = 0 is sometimes known as “radiation gauge”, although it’s really just Coulomb gauge with some extra conditions.)

With these conditions, the Maxwell equations imply that the vector potential itself satisfies the wave equation, \nabla^2 \vec{A} = \frac{1}{c^2} \frac{\partial^2 \vec{A}}{\partial t^2}, which yields the solution \vec{A}(\vec{x}, t) = A_0 \vec{\epsilon} \cos (\vec{k} \cdot \vec{x} - \omega t) where A_0 gives the field strength, \omega = c|\vec{k}| and \vec{\epsilon} is a unit polarization vector (so |\epsilon|^2 = 1.) Applying the Coulomb gauge condition to this solution gives \vec{k} \cdot \vec{\epsilon} = 0, so that the polarization vector must be transverse to the wave vector \vec{k} and has only two independent degrees of freedom. Going forwards to the electric and magnetic fields, we have for radiation \begin{aligned} \vec{E} &= -\frac{\omega}{c} A_0 \vec{\epsilon} \sin (\vec{k} \cdot \vec{x} - \omega t), \\ \vec{B} &= -A_0 (\vec{k} \times \vec{\epsilon}) \sin (\vec{k} \cdot \vec{x} - \omega t). \end{aligned}

An important quantity is the energy density u, which is given in terms of the electric and magnetic fields by u = \frac{1}{8\pi} (|\vec{E}|^2 + |\vec{B}|^2). Substituting in using the fields above, we have for free radiation u = \frac{|A_0|^2}{8\pi} \left( \frac{\omega^2}{c^2} |\vec{\epsilon}|^2 + |\vec{k} \times \vec{\epsilon}|^2 \right) \sin^2 (\vec{k} \cdot \vec{x} - \omega t) \\ = \frac{|A_0|^2}{8\pi} \left( \frac{\omega^2}{c^2} + |\vec{k}|^2 \right) \sin^2 (\vec{k} \cdot \vec{x} - \omega t) We can simplify this by identifying \omega = c|\vec{k}| and then by doing a time average, which reduces the \sin^2(...) \rightarrow 1/2, leaving us with \bar{u} = \frac{|A_0|^2 \omega^2}{8\pi c^2}.

Thus, if we are dealing with radiation with a known energy density \bar{u}, we can invert this equation to find the field normalization |A_0|, which will be useful below.

Since I find it confusing myself (partly due to an accumulation of somewhat odd definitions due to historical precedents, especially relating to astronomy), here I collect together some definitions related to the question: “how much light is there in our system?” (The relevant Wikipedia page may help, although it sets my personal record for how many times I’ve seen the word “confusing” or “confusion” appearing on one article…)

In statistical mechanics, a natural choice might be the energy density, with units of energy / volume. In the context of electromagnetism, this is typically denoted as u. For a thermal bath of photons, energy density is described by the Planck distribution, u_\omega(\omega, T) = \frac{8\pi h\nu^3}{c^3} \frac{1}{e^{h\nu / (k_B T)} - 1} = \frac{\hbar \omega^3}{\pi^2 c^3} \frac{1}{e^{\hbar \omega/(k_B T)} - 1} converting between the ordinary frequency \nu and angular frequency \omega which we prefer to use in quantum mechanics. Except, this is not the energy density, this is the spectral energy density, which is the energy per volume, per unit frequency. It is a differential quantity, meaning that we integrate it over frequency to get the ordinary energy density: u = \int d\omega\ u_\omega(\omega).

However, this isn’t always the most convenient quantity to use. If you’re interested in the light radiated by a star, what the photons are doing inside the star is irrelevant; only the photons emitted from the surface of the star are of interest. Similarly, in a quantum experiment we often have the situation where light is propagating freely through space for a long time, before interacting with a small target in some way. In either case, we want to remove one dimension (the interior of the star, or the direction of propagation of the light) and instead talk about energy per unit area.

In the context of stars, the spectral energy density can be conveniently replaced with a quantity known as the spectral radiance B_\omega, which describes energy per area, per unit time. The are related by B_\omega = \frac{c}{4\pi} u_\omega = \frac{\hbar \omega^3}{4\pi^3 c^2} \frac{1}{e^{\hbar \omega/(k_B T)}-1} with the 4\pi coming from a solid-angle integration. This is once again a differential quantity; integrating it gives B = \int d\omega\ B_\omega which is just the radiance, units of energy / area. (Strictly speaking, the SI units are energy / area / steradian, to help disambiguate the point I’m about to make, although I never liked solid angles as “units”…)

In the present context, we’re not interested in stars; in the second context mentioned of illuminating a target with a beam of light, we more naturally want to normalize to the area of our target/beam, and the integration over the full solid angle doesn’t make sense. This gives a slight but important difference in our definitions, which basically amounts to discarding the 4\pi from solid-angle integration but otherwise keeping our units fixed. This defines the more relevant quantity for us, which is the spectral irradiance I_\omega, given by I_\omega = 4 \pi B_\omega = \frac{\hbar \omega^3}{\pi^2 c^2} \frac{1}{e^{\hbar \omega/(k_B T)} - 1}. which is once again differentially related to the plain irradiance, I = \int d\omega\ I_\omega. This is in physics often simply called intensity, although “intensity” has been used for a few too many things in science, so “irradiance” is more specific if you want to be careful.

31.2 The electromagnetic perturbation

In quantum mechanics, the appropriate Hamiltonian to include electromagnetism for a charge q with mass m is \hat{H} = \frac{1}{2m} \left( \hat{\vec{p}} - \frac{q}{c} {\vec{A}} \right)^2 + V_0(\hat{x}). Note that we put hats on the momentum and position operators, but not on the gauge potential since we’re working semi-classically and treating it as a background field, so we don’t have to worry about gauge invariance or kinematical momentum operators.

There is a term missing from the Hamiltonian I’ve written above, one which is rather important and that we’ve seen before, as long as our charge has spin, which is the coupling of the spin magnetic moment to the magnetic field: \hat{V}_\mu = -\hat{\vec{\mu}} \cdot \hat{\vec{B}} = -\frac{q}{mc} \hat{\vec{S}} \cdot \vec{B}.

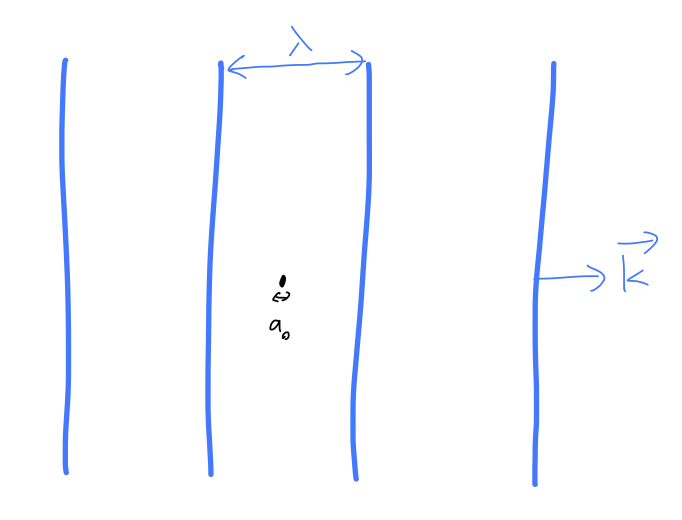

Why am I not writing this above? In general, we should include it, but we can also make a quick estimate of how large this is compared to the term that we are keeping, in the context of our radiation solution. The size of the largest term in the Hamiltonian above is E_1 \sim \frac{q}{mc} \vec{p} \cdot \vec{A} \sim \frac{q p A_0}{mc} while the magnetic term corresponds to an energy of order E_2 \sim \frac{q}{mc} \vec{S} \cdot \vec{B} \sim \frac{\hbar k q A_0}{mc} and if we take the ratio, \frac{E_2}{E_1} \sim \frac{\hbar k}{p}. So we end up with a ratio of momentum scales, but what momenta are they? \hbar k is the momentum associated with the EM wave, corresponding to a wavelength of \lambda = (2\pi/k). On the other hand, p is the momentum of the atomic electron; its characteristic wavelength will be roughly set by the Bohr radius, so p/\hbar \sim 2\pi/a_0. Thus, \frac{E_2}{E_1} \sim \frac{a_0}{\lambda}.

The Bohr radius a_0 is about 0.05 nm, whereas typical photon wavelengths from atomic transitions are of order hundreds or thousands of nm. So at least for an initial, first-order perturbative treatment, we are certainly justified in ignoring the magnetic moment interaction. This observation will also be useful for some other approximations that we’ll make below.

(This is, schematically, the difference between the de Broglie wavelength of an electron with 10 eV of energy and the wavelength of a photon with 10 eV of energy. Up to factors of c, the Bohr radius is set by an energy scale which is the geometric mean of 10 eV with the electron rest-mass energy scale of 511 keV.)

We can separate out the electromagnetic parts to treat them as a perturbation. Expanding the squared term and working in Coulomb gauge (where \nabla \cdot \vec{A} = 0 means that \hat{\vec{p}} and \vec{A} commute), and dropping the |\vec{A}|^2 term which is second-order in the field strength, we find \hat{V} \approx -\frac{q}{mc} \vec{A} \cdot \hat{\vec{p}} = -\frac{qA_0}{mc} \cos (\vec{k} \cdot \hat{\vec{x}} - \omega t) (\vec{\epsilon} \cdot \hat{\vec{p}}).

This is a harmonic perturbation, of the type we studied previously using time-dependent perturbation theory. However, there is a new feature: the spatial dependence \vec{k} \cdot \hat{\vec{x}} means that when we split the cosine into two complex exponentials, the perturbation matrix elements for the absorption and emission terms are not the same. Defining the “matrix-element integral” M_{fi}(\omega) = \int d^3x\ e^{+i(\vec{n} \cdot \vec{x}) \omega/c} \psi_f^\star(\vec{x}) (\vec{\epsilon} \cdot \nabla) \psi_i(\vec{x}) with \vec{n} = \vec{k}/|\vec{k}| the propagation direction, the perturbation matrix elements for the two terms are V_{fi}^{(+)} = -\frac{i\hbar qA_0}{mc} M_{fi}(\omega), \qquad V_{fi}^{(-)} = -\frac{i\hbar qA_0}{mc} M_{fi}(-\omega). The (+) term corresponds to absorption (E_f = E_i + \hbar\omega) and the (-) term to stimulated emission (E_f = E_i - \hbar\omega), exactly as in our general treatment. We can thus apply our previous rate formulas directly; for example, the absorption rate is W_{fi}^{(+)} = \frac{\pi}{2\hbar} \int dE_f |V_{fi}^{(+)}|^2 \rho_f(E_f) \delta(E_f - E_i - \hbar \omega).

Writing |V_{fi}^{(+)}|^2 in terms of the energy density using |A_0|^2 = 8\pi c^2 \bar{u}/\omega^2, we can express the matrix element as |V_{fi}^{(+)}|^2 = \frac{8\pi \hbar^2 q^2}{m^2 \omega^2} \bar{u}(\omega) |M_{fi}(\omega)|^2, trading the field normalization for the energy density \bar{u}(\omega) of our monochromatic radiation. Analogous expressions hold for the stimulated emission rate with M_{fi}(-\omega) and sign-reversed energy conservation.

31.3 Spontaneous emission rate

Our treatment of absorption and stimulated emission above relies on the presence of an external EM field. But we should also be able to describe spontaneous emission, in which an atom decays to a lower state by emitting a photon with no external field present. Semi-classically, we think of the perturbation as being provided by the electromagnetic field of the emitted photon itself. The perturbation takes the same form: \hat{V}_\gamma = -\frac{qA_\gamma}{mc} \cos (\vec{k} \cdot \hat{\vec{x}} - \omega t) (\vec{\epsilon} \cdot \hat{\vec{p}}). The only difference is the field strength |\vec{A}|. For stimulated processes, the energy density \bar{u} was an externally specified constant. For spontaneous emission, the energy in the field should correspond to a single photon. Working in a box of volume L^3 (expecting L-dependence to cancel), we impose E = \hbar \omega = \bar{u} L^3 giving the single-photon normalization |A_\gamma|^2 = \frac{8\pi c^2}{\omega^2} \bar{u} = \frac{8\pi \hbar c^2}{\omega L^3}.

We also need a density of states for the emitted photon, since in spontaneous emission the direction of \vec{k} is arbitrary and must be summed over. Taking our box to have periodic boundaries, the photon mode number relates to the wave vector as \vec{k} = (2\pi/L) \vec{n}, giving \int d^3n = \left( \frac{L}{2\pi} \right)^3 \int d^3k = \left( \frac{L}{2\pi} \right)^3 \int d\Omega_k \int dk\ k^2. Using the relativistic dispersion relation E = \hbar c k for photons, this becomes \int d^3n = \left(\frac{L}{2 \pi \hbar c} \right)^3 \int d\Omega_k \int dE\ E^2, from which we can read off the density of states (per solid angle) as \rho_\gamma(E) = L^3 E^2 / (8\pi^3 \hbar^3 c^3).

Putting everything together in Fermi’s golden rule, with both the photon and atomic final-state densities included, we find W_{\gamma,fi}^{(-)} = \frac{2\pi}{\hbar} \int d\Omega_k \int dE_\gamma \int dE_f \rho_f(E_f) |V_{\gamma,fi}^{(-)}|^2 \frac{L^3}{8\pi^3 \hbar^3 c^3} E_\gamma^2 \delta(E_f - E_i + E_\gamma). Plugging back in for |V_{\gamma,fi}^{(-)}|^2 introduces, among other factors, |A_\gamma|^2 \sim 1/L^3, so that as expected the dependence on the fictitious box size L cancels. We find for the differential emission rate \frac{dW_{\gamma,fi}^{(-)}}{d\Omega_k} = \frac{\hbar q^2}{2\pi c^3 m^2 \omega} \int dE_f \rho_f(E_f) |M_{fi}(-\omega_{if})|^2 \omega_{if}^2 with \omega_{if} \equiv (E_i - E_f)/\hbar > 0. The angular integral cannot always be done trivially, since the matrix element M_{fi} can depend on the photon direction \vec{n}.

31.4 Dipole approximation

Now let’s simplify the matrix-element integral M_{fi}(\omega). Earlier we justified dropping the electron spin contribution to the electromagnetic Hamiltonian by arguing that it is negligible because typical radiation wavelengths are much larger than atomic sizes, \lambda \gg a_0:

This same separation of scales means that over the distance scales of an atomic interaction, we can treat the electric and magnetic fields as spatially constant. This lets us replace e^{i\vec{k} \cdot \vec{x}} \rightarrow 1 in the matrix-element integral: M_{fi}(\omega) \approx \int d^3x \psi_f^\star(\vec{x}) (\vec{\epsilon} \cdot \nabla) \psi_i(\vec{x}). Notice that this replacement removes all of the \omega dependence from the integral. The remaining integral can be simplified with a nice trick that requires us first to lift back out of position space: M_{fi} = \vec{\epsilon} \cdot (\bra{f} \frac{i}{\hbar} \hat{\vec{p}} \ket{i}). Now, we can exploit the fact that \ket{i} and \ket{f} are energy eigenstates, together with remembering the fact that for any Hamiltonian of the form \hat{p}^2 / (2m) + V(\hat{x}), the Heisenberg equation of motion for \hat{\vec{x}} is especially simple: \hat{\vec{p}} = \frac{-i}{\hbar} m [\hat{\vec{x}}, \hat{H}] This lets us rewrite: \bra{f} \frac{i}{\hbar} \hat{\vec{p}} \ket{i} = \frac{m}{\hbar^2} \bra{f} [\hat{\vec{x}}, \hat{H}] \ket{i} = -\frac{m \omega_{fi}}{\hbar} \bra{f} \hat{\vec{x}} \ket{i}.

Thus, we have the result:

For radiation interacting with matter in the case where the radiation wavelength \lambda is very large compared to the size of the object, the matrix element for a transition \ket{i} \rightarrow \ket{f} is given by the dipole transition matrix element, M_{fi} = \frac{i}{\hbar} \bra{f} \vec{\epsilon} \cdot \hat{\vec{p}} \ket{i} = -\frac{m \omega_{fi}}{\hbar} \bra{f} \vec{\epsilon} \cdot \hat{\vec{x}} \ket{i} = -\frac{m \omega_{fi}}{\hbar} \vec{\epsilon} \cdot \int d^3x \psi_f^\star(\vec{x}) \vec{x} \psi_i(\vec{x}).

Since we have a couple of frequencies running around and it can be confusing, I’ll emphasize that this has become independent of the radiation frequency \omega; the frequency \omega_{fi} showed up through the Hamiltonian acting on the energy levels \ket{i}, \ket{f}.

This is called “dipole approximation” since the matrix element \bra{f} \hat{\vec{x}} \ket{i} is the same one that would show up for a charged dipole interacting with a background electric field. As we have discussed, it is a natural approximation to use for atomic transitions due to the separation of scales \lambda \gg a_0. It also happens to be exactly the approximation we would want to make in order to make sure our semiclassical treatment of light is valid! Long wavelengths mean (as we’ve seen) that the EM field is approximately static in our interactions, and the fact that the field is really quantized into photons is only really relevant when we consider its dynamics.

Another way to think of dipole approximation is that it comes from series expansion of the object e^{i\vec{k} \cdot \vec{x}} appearing inside of M_{fi}: \bra{f} e^{\pm i\vec{k} \cdot \vec{x}} (\vec{\epsilon} \cdot \hat{\vec{p}}) \ket{i} \approx \bra{f} \left(1 \pm i\vec{k} \cdot \hat{\vec{x}} - \frac{1}{2} |\vec{k} \cdot \hat{\vec{x}}|^2 + ...\right) (\vec{\epsilon} \cdot \hat{\vec{p}}) \ket{i}

Dipole approximation amounts to keeping just the (1) term in the expansion; transitions due to this coupling are also known as “E1” transitions in e.g. quantum chemistry literature, “E” for electric and “1” for the leading term. However, as we’ve already seen before (see Section 27.3), in some cases (depending on the specific states \ket{i}, \ket{f}) the dipole matrix element \bra{f} \vec{x} \ket{i} vanishes due to symmetry.

In such cases, as long as we still have \vec{k} \cdot \vec{x} \ll 1, the dominant contribution will come from keeping the first non-vanishing term in the expansion. In particular, the next term in the expansion, \pm i(\vec{k} \cdot \hat{\vec{x}})(\vec{\epsilon} \cdot \hat{\vec{p}}), can be decomposed into a symmetric and antisymmetric part under exchange of \vec{k} and \vec{\epsilon}. The antisymmetric part gives rise to magnetic dipole (M1) transitions, coupling to the orbital angular momentum \hat{\vec{L}}, while the symmetric (traceless) part gives electric quadrupole (E2) transitions (which we discussed a bit back in Chapter 28). Both are suppressed relative to E1 by a factor of order a_0/\lambda.

31.4.1 Spontaneous emission rate in dipole approximation

Applying the dipole approximation to the spontaneous emission rate, we need to sum over the two photon polarization directions and integrate over the photon emission angle. For the polarization sum, we can derive a useful identity: given any vector \vec{v} and two polarization vectors \vec{\epsilon}_1, \vec{\epsilon}_2 orthogonal to \vec{k}, \sum_{\epsilon} |\vec{\epsilon} \cdot \vec{v}|^2 = |\vec{v}|^2 - \frac{|\vec{v} \cdot \vec{k}|^2}{|\vec{k}|^2} = |\vec{v}|^2 (1 - \cos^2 \theta_k), where \theta_k is the opening angle between \vec{v} and \vec{k}.

For spontaneous emission, because the photon is only in the final state, we sum over polarizations and emission directions. But for absorption, where the initial-state polarization is unknown, we would instead average (sum with an extra factor of 1/2.)

We can understand the difference in terms of density matrices. Classically, the polarization of our radiation is represented by a normalized two-component vector, \vec{\epsilon} = a \vec{\epsilon}_1 + b \vec{\epsilon}_2. In quantum mechanics, this means that the polarization is described by a two-state Hilbert space spanned by \ket{\epsilon_1} and \ket{\epsilon_2}. Unpolarized light in quantum mechanics, then, is light with a density matrix which represents maximum uncertainty about the polarization vector: \hat{\rho}_{\rm unpol} = \frac{1}{2} \ket{\epsilon_1}\bra{\epsilon_1} + \frac{1}{2} \ket{\epsilon_2}\bra{\epsilon_2} If we have an observable that depends on polarization, we thus see that its expectation value is given by {\rm Tr} (\hat{\rho}_{\rm unpol} \hat{O}(\epsilon)) = \frac{1}{2} \sum_{\epsilon} \left\langle \hat{O}(\epsilon) \right\rangle.

In other words, as we’ve learned from density matrices, classical uncertainty about polarization in the initial state results in an average over the polarization vector in our observable.

On the other hand, if we don’t know the final-state polarization of our radiation, that simply means that we’re not measuring it, which means that whatever measurement we are doing acts as the identity matrix on the polarization subspace: \hat{O}_{\rm unpol} = \hat{O} \otimes \hat{1}_{\epsilon} = \hat{O} \otimes (\ket{\epsilon_1}\bra{\epsilon_1} + \ket{\epsilon_2}\bra{\epsilon_2}) which is a sum, not an average - so no extra factor to normalize.

The rule of thumb to remember is, generally: average over initial states, sum over final states.

Applying the polarization sum and doing the angular integration over d\Omega_k, we find the total spontaneous emission rate for a transition between two discrete states: W_{\gamma,fi}^{(-)} = \frac{\hbar \omega q^2}{2\pi m^2 c^3} \int d\Omega_k \sum_{\epsilon} |M_{fi}(\omega)|^2 \\ = \frac{\omega^3 q^2}{2\pi \hbar c^3} \int d\Omega_k (1 - \cos^2 \theta_k) |\bra{f} \hat{\vec{x}} \ket{i}|^2 \\ = \frac{4\omega^3 q^2}{3\hbar c^3} |\bra{f} \hat{\vec{x}} \ket{i}|^2, where the \int d\Omega_k (1 - \cos^2 \theta_k) = 8\pi/3.

In an earlier version of these notes, there was much more detailed discussion of connecting these calculations to Einstein coefficients, part of Einstein’s original statistical-mechanics argument about matter interacting with radiation. In particular, from this result and the other expressions above we can verify that the ratio A/B of spontaneous to stimulated emission rates matches the prediction from Einstein’s original statistical-mechanics argument, A_{21}/B_{21} = \hbar \omega^3 / (\pi^2 c^3).

I may add an appendix to be linked here with more details to these notes later.

The differential rate dW/d\Omega_k before the angular integration also carries useful information about the angular distribution of emitted radiation, which depends on the quantum numbers of the states involved in the transition.

31.5 Spontaneous emission in hydrogen-like atoms

Now let’s apply these results to hydrogen and hydrogen-like atoms. Working in dipole approximation, the initial-state and final-state wavefunctions can be decomposed according to their quantum numbers as \psi_{nlm}(\vec{r}) = R_{nl}(r) Y_l^m(\theta, \phi). Our result above for the total spontaneous emission rate holds; setting the charge q = e and plugging in the fine-structure constant \alpha = e^2/(\hbar c), we can usefully rewrite \frac{dW_{i \rightarrow f}}{d\Omega_k} = \frac{\omega^3 e^2}{2\pi \hbar c^3} \sum_{\epsilon} |\bra{f} \vec{\epsilon} \cdot \hat{\vec{r}} \ket{i}|^2 for the angular differential rate, or W_{i \rightarrow f} = \frac{4\alpha \omega^3}{3c^2} |\bra{f} \hat{\vec{r}} \ket{i}|^2 for the total rate.

The matrix element needed for a dipole transition from \ket{i} = \ket{n_i l_i m_i} to \ket{f} = \ket{n_f l_f m_f}, then, is \bra{f} \hat{\vec{r}} \ket{i} = \bra{n_f l_f m_f} \hat{\vec{r}} \ket{n_i l_i m_i}. Here we’ll work in a spherical basis, writing \vec{r} = \sum_q \hat{e}_q^\star r_q^{(1)} where the spherical components of the position vector are, as a reminder, r_q^{(1)} = \sqrt{\frac{4\pi}{3}} r Y_1^q(\theta, \phi). Then we have for the component matrix elements \bra{n_f l_f m_f} \hat{r}_q^{(1)} \ket{n_i l_i m_i } = \int dr\ R_{n_f l_f}^\star(r) R_{n_i l_i}(r) r^3 \int d\Omega Y_{l_f}^{m_f}{}^\star(\theta, \phi) \sqrt{\frac{4\pi}{3}} Y_1^q(\theta, \phi) Y_{l_i}^{m_i}(\theta \phi) \\ = I_R \sqrt{\frac{(2l_i+1)}{(2l_f+1)}} \left\langle l_i 1;00 | l_i 1;l_f 0 \right\rangle \left\langle l_i 1;m_i q | l_i 1;l_f m_f \right\rangle, writing I_R for the result of the radial integral, and using the identity we derived for triple spherical-harmonic integrals in Section C.0.1. The selection rules implied by the Clebsch-Gordans and by parity are (see discussion in Section 27.3) l_f - l_i = \pm 1; \\ |m_f - m_i| \leq 1.

So far, this is also written for a single transition between two energy eigenstates, but often we want to sum over several degenerate states, for example to compute an overall 2p \rightarrow 1s transition rate by summing over the m_i and m_f. In all such cases, we know the rule: we average over all initial states and sum over all final states. So for example, W_{n_i l_i \rightarrow n_f l_f} = \frac{4\alpha \omega^3}{3c^2} \frac{1}{2l_i + 1} \sum_{m_i} \sum_{m_f} |\bra{n_f l_f m_f} \hat{\vec{r}} \ket{n_i l_i m_i}|^2 \\ = \frac{4\alpha \omega^3}{3c^2} \frac{1}{2l_i + 1} \sum_{m_i} \sum_{m_f} \sum_{q=-1}^{1} |\bra{n_f l_f m_f} \hat{r}_q^{(1)} \ket{n_i l_i m_i}|^2 passing to spherical tensor notation on the last step. Although there is a formal sum over the index q present, in practice rotational symmetry requires m_f = m_i + q, which means that only one term will survive for a given pair \{m_i, m_f\}.

This is an obvious place where we can use the Wigner-Eckart theorem, to write this instead in terms of a single reduced matrix element \bra{n_f l_f} |\hat{\vec{r}}^{(1)}| \ket{n_i l_i} instead of calculating all of the terms separately.

31.6 Example: S \rightarrow P transitions in a magnetic field

Let’s have a closer look at the case of a transition in hydrogen with a weak magnetic field applied to split the states apart. In this case (known as Zeeman splitting, see Section 28.6), the appropriate quantum numbers are \ket{nljm}, and the energy levels are E_{njm} = E_n^{(0)} + \Delta_{jm}^{(1)} = -\frac{1}{n^2} {\rm Ry} + \frac{eB}{2m_e c} g_j \hbar m \equiv -\frac{1}{n^2} {\rm Ry} + E_B g_j m, defining E_B as the energy associated with the magnetic field and with g_j the Landé g-factor, g_j = \begin{cases} 1 + \frac{1}{2l+1}, & j = l + 1/2; \\ 1 - \frac{1}{2l+1}, & j = l - 1/2. \end{cases}

We’re implicitly working in the “anomalous Zeeman regime”, with the hierarchy \Delta E_0 \gg E_{\rm fs} \gg E_B. Fine structure is large enough that it picks out \ket{nljm} as the good basis and gives us the Landé g_j factors below, but small enough that we can safely neglect its contribution to the transition frequencies and to the dipole matrix elements themselves. If we dropped fine structure entirely, a good basis for a weak B field would instead be \ket{nlm_lm_s} (since \hat{L}_z + 2\hat{S}_z is already diagonal there).

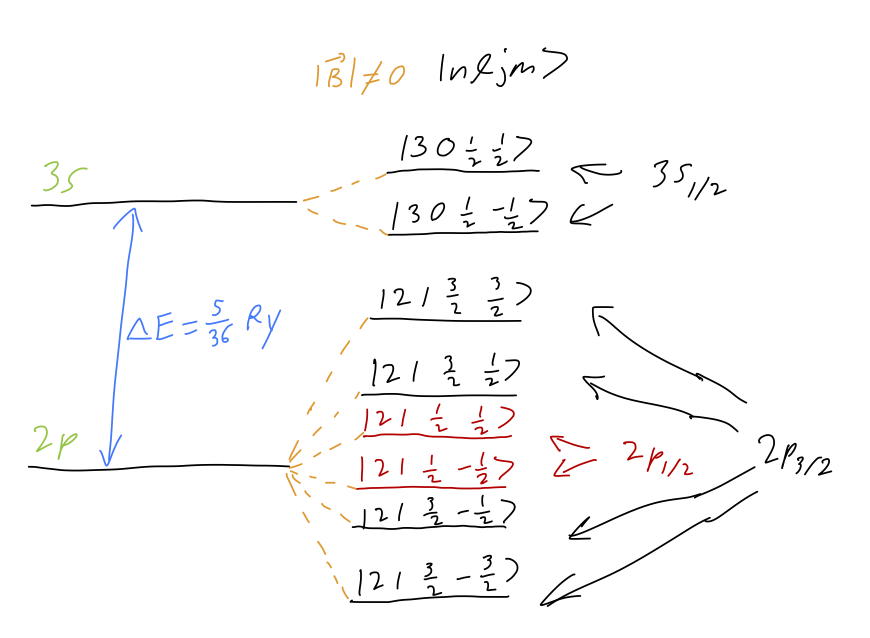

Before we think about transitions, let’s remind ourselves of what the spectrum looks like. For concreteness let’s say that we’re looking at the 3s \rightarrow 2p transition, although everything we’re about to do generalizes easily to other values of n. In modified spectroscopic notation (dropping the spin label which is always 2), the available energy levels are 3s_{1/2} (two states), 2p_{1/2} (two states), and 2p_{3/2} (four states). Each state is split completely, with g-factors of 2, 2/3, and 4/3 respectively. There is also a large splitting between the n=3 energy level and the n=2 levels. Here is a sketch of the energy levels available:

The dominant energy splitting is due to the difference in principal energy levels, \Delta E_0 = (\frac{1}{3^2} - \frac{1}{2^2}) Ry = 5/36 Ry. There are 12 total transitions, but some of them are forbidden: in particular, the two \ket{j=3/2, m = \pm 3/2} levels can’t be reached from \ket{j=1/2, m=\mp 1/2} due to the \Delta m selection rule. Thus, we expect 10 emission lines in total. (We are ignoring transitions between the two 2p levels; they are certainly possible due to the Zeeman splitting, but they will be in a completely different frequency range from the 3 \rightarrow 2 transitions.)

To compute the rates for the transitions, we need the relevant matrix elements. Invoking the Wigner-Eckart lets us write them all in terms of two reduced matrix elements: we have \bra{21\tfrac{1}{2} m_f} \vec{r}_q^{(1)} \ket{30\tfrac{1}{2} m_i} = \left\langle \tfrac{1}{2} 1; m_i q | \tfrac{1}{2} 1;\tfrac{1}{2} m_f \right\rangle \frac{\bra{n=2,j=\tfrac{1}{2}} |\vec{r}| \ket{n=3,j=\tfrac{1}{2}}}{\sqrt{2}}, \\ \bra{21\tfrac{3}{2} m_f} \vec{r}_q^{(1)} \ket{30\tfrac{1}{2} m_i} = \left\langle \tfrac{1}{2} 1;m_i q | \tfrac{1}{2} 1;\tfrac{3}{2} m_f \right\rangle \frac{\bra{n=2,j=\tfrac{3}{2}} |\vec{r}| \ket{n=3,j=\tfrac{1}{2}}}{2}.

Let’s denote the two reduced matrix elements as R_{1/2} \equiv \bra{n=2,j=\tfrac{1}{2}} |\vec{r}| \ket{n=3,j=\tfrac{1}{2}}, \\ R_{3/2} \equiv \bra{n=2,j=\tfrac{3}{2}} |\vec{r}| \ket{n=3,j=\tfrac{1}{2}}. To actually compute these reduced matrix elements, we need to compute one of the matrix elements in full, which means doing radial integrals. Here, we’ll stick to working out the relative intensity of the spectrum, which just depends on the Clebsch-Gordan coefficients.

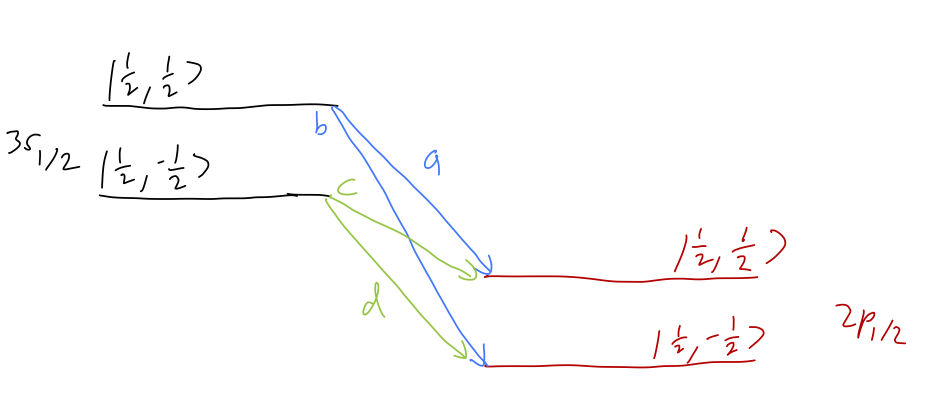

Let’s begin with the 3s_{1/2} \rightarrow 2p_{1/2} transitions. We have four possible transition lines that we’ll denote a,b,c,d:

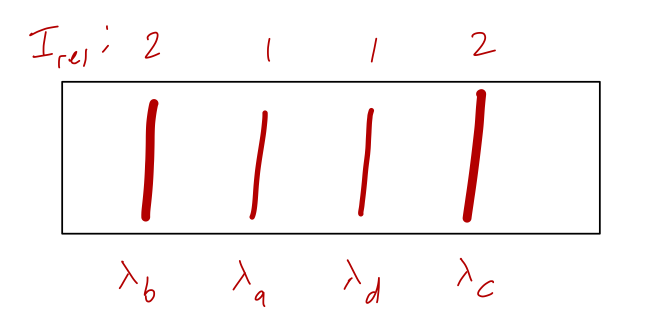

The corresponding matrix elements are, plugging in Clebsch-Gordans from a table, M_a = \frac{\left\langle \tfrac{1}{2} 1; \tfrac{1}{2}0 | \tfrac{1}{2} 1; \tfrac{1}{2} \tfrac{1}{2} \right\rangle}{\sqrt{2}} R_{1/2} = -\frac{1}{\sqrt{6}} R_{1/2}, \\ M_b = -\frac{1}{\sqrt{3}} R_{1/2}, \\ M_c = \frac{1}{\sqrt{3}} R_{1/2}, \\ M_d = \frac{1}{\sqrt{6}} R_{1/2}. To get the decay rates \Gamma, which are proportional to the intensities, we square these and add some other factors, for example \Gamma_a = \frac{4\alpha \omega_a^3}{3c^2} |M_a|^2 and so on. But if we want the relative intensity of the lines, everything cancels - including the reduced matrix element R_{1/2}. Only the ratios of Clebsch-Gordans squared matter. We can read off the result: I_a : I_b : I_c : I_d = 1 : 2 : 2 : 1. In other words, the two middle transition lines will be twice as bright as the other two. (Actually, this isn’t exactly right; the intensities also go as \omega^3, and the transition energies are slightly different. But they are all dominated by the energy-level change \Delta E_0, so the differences in frequency will be relatively small.)

If we were to look at the emission through a diffraction grating, we’ll be directly sensitive to the wavelength \lambda of each transition. What are the wavelengths corresponding to the transitions? First, we need the energy splittings. The Landé g-factors for these states are g_j = 2 for the {}^3S_{1/2} states, and g_j = \tfrac{2}{3} for {}^2P_{1/2}. Plugging in to the formula above for E_{njm}, we have \Delta E_a = E_B (2) (+\tfrac{1}{2}) - E_B (\tfrac{2}{3}) (+\tfrac{1}{2}) = +\frac{2}{3} E_B \\ \Delta E_b = +\frac{4}{3} E_B \\ \Delta E_c = -\frac{4}{3} E_B \\ \Delta E_d = -\frac{2}{3} E_B. Plugging in, \lambda_a = \frac{2\pi \hbar c}{\Delta E_a} = \frac{2\pi \hbar c}{\Delta E_0 + 2E_B/3} \\ \lambda_b = \frac{2\pi \hbar c}{\Delta E_0 + 4E_B/3} \\ \lambda_c = \frac{2\pi \hbar c}{\Delta E_0 - 4E_B/3} \\ \lambda_d = \frac{2\pi \hbar c}{\Delta E_0 - 2E_B/3}. So the wavelengths are ordered \lambda_b < \lambda_a < \lambda_d < \lambda_c. Assuming that the magnetic field contribution E_B is small, the approximate wavelength of all of them is \bar{\lambda} = \frac{2\pi \hbar c}{\Delta E_0} = \frac{2\pi (6.58 \times 10^{-16}\ {\rm eV} \cdot {\rm s}) (3 \times 10^8\ {\rm m/s})}{1.89\ {\rm eV}} \approx 660\ {\rm nm} which is red visible light. (Since we’ll use it in a moment, the corresponding angular frequency is \bar{\omega} = 2\pi c/\bar{\lambda} = 2.86 \times 10^{15}\ {\rm Hz}.) That means if we look through a diffraction grating, we’ll see a pattern like this:

Now suppose that we turn off our magnetic field and we’re just interested in the total rate \Gamma for the 3s \rightarrow 2p transition. This means that we have to sum and average over all of the possible initial and final states, as above: \Gamma_{3s \rightarrow 2p_{1/2}} = \frac{1}{2} \sum_{m_i} \sum_{m_f} \Gamma_{m_i \rightarrow m_f} \\ = \frac{2\alpha}{3c^2} (|M_a|^2 \omega_a^3 + |M_b|^2 \omega_b^3 + |M_c|^2 \omega_c^3 + |M_d|^2 \omega_d^3) \\ \approx \frac{2\alpha \bar{\omega}^3}{3c^2} |R_{1/2}|^2 \left( \frac{1}{6} + \frac{1}{3} + \frac{1}{3} + \frac{1}{6} \right) \\ = \frac{2\alpha \bar{\omega}^3}{3c^2} |R_{1/2}|^2.

Let’s evaluate the reduced matrix element to finish the calculation and get an actual number out. To find it, we invert the Wigner-Eckart theorem to write R_{1/2} = \frac{\sqrt{2}}{\left\langle \tfrac{1}{2} 1; m_i q | \tfrac{1}{2} 1; \tfrac{1}{2} m_f \right\rangle} \bra{2 1 \tfrac{1}{2} m_f} \hat{r}_q^{(1)} \ket{3 0 \tfrac{1}{2} m_i}. Now we’re free to pick any m_i, m_f, q that we want, as long as they satisfy the selection rule (if they don’t, we’ll know because the Clebsch-Gordan coefficient won’t exist.) Let’s take m_i = m_f = \tfrac{1}{2}, which then requires q=0: plugging in, R_{1/2} = -\sqrt{6} \bra{2 1 \tfrac{1}{2} \tfrac{1}{2}} \hat{r}_0^{(1)} \ket{3 0 \tfrac{1}{2} \tfrac{1}{2}}. The one remaining obstacle here is that this matrix element is written in the \ket{nljm} basis, but we only really know how to evaluate matrix elements as integrals in the \ket{nlm_lm_s} basis. (For fine structure we used various algebraic tricks to get around this.) Fortunately, we know how to change bases easily: \ket{3 0 \tfrac{1}{2} \tfrac{1}{2}} = \ket{300} \ket{\uparrow}, \\ \ket{2 1 \tfrac{1}{2} \tfrac{1}{2}} = \sqrt{\frac{2}{3}} \ket{211} \ket{\downarrow} - \sqrt{\frac{1}{3}} \ket{210} \ket{\uparrow} using the same C-G table again and splitting out the spin in the \ket{nlm_l m_s} states on the right to make them more easily distinguishable. Since the operator \hat{r} doesn’t talk to spin at all, the spin can’t change from initial to final state, and we have R_{1/2} = \sqrt{2} \bra{210} \hat{r}_0^{(1)} \ket{300} \\ = \sqrt{2} \int dr R_{21}^\star(r) R_{30}(r) r^3 \sqrt{\frac{1}{3}} \left\langle 01;00 | 01;10 \right\rangle \left\langle 01;00 | 01;10 \right\rangle using the identity we wrote out above to simplify the triple-Y integral. We need one more C-G coefficient, but this one is for addition of j=0, which means that the coefficent (which appears twice) is just 1, so at last, we have R_{1/2} = \sqrt{\frac{2}{3}} \int dr R_{21}(r) R_{30}(r) r^3. Going to Mathematica to evaluate this final integral, we have the result R_{1/2} \approx 0.766 a_0.

Finally, putting everything together and plugging in a_0 = 5.29 \times 10^{-11} m, \Gamma_{3s \rightarrow 2p_{1/2}} \approx \frac{2\alpha \bar{\omega}^3}{3c^2} |R_{1/2}|^2 \approx 2.1 \times 10^{6}\ {\rm Hz} or about 2.1 MHz. If we look this up in the NIST atomic spectra database, we find a transition frequency of 2.10 \times 10^6/s, so we’ve got it exactly right!

31.7 Line widths

There is one more important idea that we should discuss in the current context, which has to do with the lifetime of a state \ket{i}. If there are spontaneous transitions available from this state to some set of final states \{\ket{f}\}, then over time it will decay away. So far we know how to compute transition rates between states, but remaining in the same state requires us to revisit our Fermi’s golden rule calculation in a more careful way. As discussed previously, in quantum mechanics the initial state won’t obviously just irreversibly decay from \ket{i} \rightarrow \ket{f}: the reverse process \ket{f} \rightarrow \ket{i} is also allowed, so we can jump from \ket{i} \rightarrow \ket{f} \rightarrow \ket{i} and still remain in the initial state. The latter process is second-order in our perturbation (since it requires two transitions.)

Previously, I noted that constant perturbations in time-dependent perturbation theory can’t be thought of as truly constant; we should think of them being adiabatically switched on and off. To accomplish that more rigorously, let’s study a constant perturbation again but with an adiabatic damping factor applied: \hat{V}(t) = \hat{V} e^{\eta t}, with \eta a very small auxiliary variable that we will take to zero (carefully) to recover a constant perturbation. By construction this regulates things as t \rightarrow -\infty, which we’ll take to be our initial state; it blows up at t \rightarrow \infty, but we’ll just evolve to a finite time t and make sure we deal with the order of limits properly to get there.

31.7.1 Rederiving Fermi’s golden rule

Let’s start back at first-order again for a transition between states. For convenience, we’ll partly move to the interaction picture: we’ll compute the interaction transition amplitude U_{fi}^{(I)}(t), which absorbs the two phases that aren’t being integrated over: U_{fi}^{(I)}(t) = \delta_{fi} - \frac{i}{\hbar} \int_{t_i}^t dt' e^{i\omega_{fi} t'} e^{\eta t'} \bra{f} \hat{V} \ket{i} \\ = \delta_{fi} - \frac{i V_{fi}}{\hbar} \frac{1}{i\omega_{fi} + \eta} e^{(i\omega_{fi} + \eta)t}. Let’s assume that f \neq i to begin with, and square this to get the transition probability, p_{fi}(t) = |U_{fi}^{(I)}(t)|^2 = \frac{|V_{fi}|^2}{\hbar^2 (\omega_{fi}^2 + \eta^2)} e^{2\eta t}, and then the rate is W_{fi} = \lim_{t\rightarrow \infty} p_{fi}(t) / t. (Notice that the use of the interaction-picture amplitude is now equivalent to the regular amplitude, since the difference is just two phases that square away in the probability.) But now we have to be careful with the order of limits; if we try to set \eta \rightarrow 0 first, the time dependence vanishes and we just get W_{fi} = 0. If we take the t limit first, it seems to diverge. But we can apply l’Hopital’s rule under the time limit to get \lim_{t \rightarrow \infty} \frac{e^{2\eta t}}{t} = \lim_{t \rightarrow \infty} 2\eta e^{2\eta t}. With this replacement, now we can take the \eta limit, but things are still delicate. We now have W_{fi} = \lim_{t \rightarrow \infty} \lim_{\eta \rightarrow 0} \frac{\eta}{\omega_{fi}^2 + \eta^2} \frac{2 |V_{fi}|^2}{\hbar^2} e^{2\eta t}. The first term is zero unless \omega_{fi} = 0, in which case it is infinite. This should remind you of a delta function, and in fact if we use the integral definition \delta(x-a) = \frac{1}{2\pi} \int_{-\infty}^\infty dk e^{ik(x-a)}, it’s straightforward to show that \lim_{\eta \rightarrow 0} \frac{\eta}{\omega_{fi}^2 + \eta^2} = \frac{1}{2} \lim_{\eta \rightarrow 0} \int_{-\infty}^\infty dk e^{ik\omega_{fi}-\eta|k|} = \pi \delta(\omega_{fi}).

Taking this limit, the remaining time-dependence goes away and we recover the familiar result for the golden rule, absorbing one factor of \hbar back into the delta function: W_{fi} = \frac{2\pi}{\hbar} |\bra{f} \hat{V} \ket{i}|^2 \delta(E_f - E_i).

31.7.2 Second-order transition amplitude

Now let’s go back to the case for the amplitude to remain in the initial state. In this case, to see the physics we’re interested in, we will need to work to second order in perturbation theory. We’ll continue to compute interaction-picture transition amplitudes, so the expression we have is: U_{ii}^{(I)} = 1 -\frac{i}{\hbar} V_{ii} \int_{t_i}^t dt' e^{\eta t'} + \left( -\frac{i}{\hbar} \right)^2 \int_{t_i}^t dt_1 \int_{t_i}^{t_1} dt_2 e^{\eta(t_1 + t_2)} \sum_n |\bra{n} \hat{V} \ket{i}|^2 e^{-i\omega_{ni} t_1} e^{i\omega_{ni} t_2}. In the first integral, the energy-dependent phases cancel with the same initial and final state, and so the first-order term evaluates to simply (with t_i \rightarrow -\infty) U_{ii}^{(I,1)} = -\frac{i}{\hbar} \frac{V_{ii}}{\eta} e^{\eta t}. For the second order term, it will take a bit more work. Doing the t_2 integral first, we have \frac{-1}{\hbar^2} \sum_n |V_{ni}|^2 \frac{1}{i\omega_{ni} + \eta} \int_{t_i}^{t} dt_1 e^{-i\omega_{ni} t_1} e^{(\eta + i\omega_{ni})t_1} e^{\eta t_1} \\ = -\frac{1}{2\hbar^2} \sum_n \frac{|V_{ni}|^2 / \eta }{i \omega_{ni} + \eta} e^{2\eta t}, with the frequency factors cancelling out in the dt_1 integral.

Now, at this point we can recombine things and square to get a probability. If we do so, we find some cancellation leads to a very simple result for the probability, through second order: p_{i \rightarrow i}(t) = 1 - \frac{1}{\hbar^2} \sum_{n \neq i} \frac{|V_{ni}|^2}{\omega_{ni}^2 + \eta^2} e^{2\eta t} = 1 - \sum_{n \neq i} p_{i \rightarrow n}(t). This is just the expected simple relation from conservation of probability, so it’s a good check on our setup so far. However, to see the interesting physics we’re looking for, we need to look more closely at what happens to the amplitude itself when we take our limits.

Doing this requires a bit of math which isn’t especially enlightening, so I’ll include it as an aside and skip to the key results.

We would like to take the limit t \rightarrow \infty and see something constant happening, since we are applying a constant perturbation (as \eta \rightarrow 0.) However, we can’t just define a rate in the same way as for transitions to different states; a rate for something not happening doesn’t really make sense at face value. Instead, let’s adopt the ansatz that the amplitude evolves in time as a simple exponential, obeying the equation i\hbar \frac{dU_{ii}}{dt} = \Delta_i U_{ii}, with d\Delta_i/dt = 0, so that U_{ii}(t) = e^{-i\Delta_i t/\hbar}. If this equation is true, then we can compute the constant directly from our formula for the transition amplitude. First, let’s write out the full amplitude again and simplify a bit: U_{ii}^{(I)}(t) = 1 - \frac{i}{\hbar \eta} V_{ii} e^{\eta t} -\frac{1}{2\hbar^2} \frac{|V_{ii}|^2}{\eta^2} e^{2\eta t} - \frac{1}{2\hbar^2 \eta} \sum_{n \neq i} \frac{|V_{ni}|^2}{i\omega_{ni} + \eta} e^{2\eta t}, splitting out the same-state term n=i from the sum. Proceeding with the ansatz, we see that

\Delta_i = i\hbar \frac{dU_{ii}/dt}{U_{ii}} \\ = i\hbar \frac{-\frac{i}{\hbar} V_{ii} e^{\eta t} - \frac{1}{\hbar^2 \eta} |V_{ii}|^2 e^{2\eta t} - \frac{1}{\hbar^2} \sum_{n \neq i} \frac{|V_{ni}|^2}{i\omega_{ni} + \eta} e^{2\eta t} + ...}{1 - \frac{i}{\hbar \eta} V_{ii} e^{\eta t} + ...} where we only need the first term in the denominator because we should work at consistent order in perturbation theory, meaning we should series expand the denominator and only keep terms up to order V^2. Doing so, we find \Delta_i = V_{ii} e^{\eta t} + \frac{i}{\hbar \eta} V_{ii}^2 e^{2\eta t} - \frac{i}{\hbar \eta} |V_{ii}|^2 e^{2\eta t} - \frac{i}{\hbar} \sum_{n \neq i} \frac{|V_{ni}|^2}{i \omega_{ni} + \eta} e^{2\eta t} + ... This will be constant once we take \eta \rightarrow 0, so the ansatz appears to have worked! The middle two terms cancel, but the last term is a bit delicate: so far, then, we have \Delta_i = \bra{i} \hat{V} \ket{i} + \lim_{\eta \rightarrow 0} \sum_{n \neq i} \frac{|\bra{n} \hat{V} \ket{i}|^2}{E_i - E_n + i \hbar \eta} e^{2\eta t}. Before we continue to simplify, we should notice that if we work at first order in perturbation theory, our result simply gives us a pure phase, which we recognize as the first-order energy correction in time-independent perturbation theory! In fact, if we go back from interaction picture to Schrödinger picture for the amplitude, we have U_{ii}(t) = \exp \left( -i (E_i + \Delta_i) t/\hbar \right).

The upshot of the derivation above is that the amplitude for \ket{i} \rightarrow \ket{i} evolves in time as a pure phase of the form U_{ii}(t) = \exp \left( -i (E_i + \Delta_i) t/\hbar \right), where \Delta_i = \bra{i} \hat{V} \ket{i} + \lim_{\eta \rightarrow 0} \sum_{n \neq i} \frac{|\bra{n} \hat{V} \ket{i}|^2}{E_i - E_n + i \hbar \eta} e^{2\eta t}.

This is just saying that the system evolves with a pure energy-dependent phase, but the energy is corrected, with the total energy E_i + \Delta_i bearing a very strong resemblance to the time-independent perturbation theory energy correction. In fact, we see the first term \bra{i} \hat{V} \ket{i} is exactly the first-order energy correction. So if we only go to first order in perturbation theory, the statement is that \ket{i} evolves into itself as a pure phase according to its corrected energy.

31.7.3 Decay and natural width

Now let’s consider the second term; it looks similar to the second-order time-independent correction, but the limit we still need to take is delicate. At first glance, it may seem like we can simply set \eta = 0 and recover the second-order time-independent energy correction. If our Hilbert space is both finite and non-degenerate, then this is true and we’re done: \Delta_{i}^{(\rm finite)} = \bra{i} \hat{V} \ket{i} + \sum_{n \neq i} \frac{|\bra{n} \hat{V} \ket{i}|^2}{E_i - E_n}. However, this also means the dynamics is trivial: we find p_{ii}(t) = |U_{ii}(t)|^2 = 1, so our system remains permanently in state \ket{i}, just evolving by a phase. This is exactly what we should expect from the golden rule, since an adiabatic perturbation requires energy conservation, and we’ve specified that all of the other states have different energies.

If the Hilbert space is finite and some of the other states are degenerate, then things still aren’t very interesting: we know how to deal with that already, by diagonalizing \hat{H}_0 + \hat{V} over the degenerate subspace, so that the would-be divergent terms have zero matrix elements.

The only case which will lead to something interesting is the case where there are an infinite number of degenerate states, in which case we should replace our sum with an integration over the density of states: \sum_{n \neq i} (...) \rightarrow \int_0^\infty dE_n \rho_f(E_n) \frac{|V_{ni}|^2}{E_i - E_n + i\hbar \eta} e^{2\eta t}. Now the limit \eta \rightarrow 0 is delicate again, since it is regulating the divergence which occurs when E_n = E_i. Taking the limit requires some use of complex analysis; I’ll rely on the derivation in appendix A of Merzbacher and just skip to the resulting limit identity, which is only valid under an integral: \lim_{\epsilon \rightarrow 0^+} \frac{1}{x+i\epsilon} = \mathcal{P} \frac{1}{x} - i\pi \delta(x), where \mathcal{P} means “evaluate the Cauchy principal value of the integral with the function set to 1/x”. (This formula is known as the “Sokhotski-Plemelj identity”.) Since everything else in the integral is real, we can read off the real and imaginary parts of the correction term: {\rm Re}(\Delta_i^{(2)}) = \mathcal{P} \int_0^\infty dE_n \frac{\rho_f(E_n) |V_{ni}|^2}{E_i - E_n}, \\ {\rm Im}(\Delta_i^{(2)}) = -\pi \int_0^\infty dE_n \rho_f(E_n) |V_{ni}|^2 \delta(E_i - E_n) or restoring the sum, {\rm Im}(\Delta_i^{(2)}) = \frac{\hbar}{2} \sum_{n \neq i} W_{i \rightarrow n}. We won’t go into the details of calculating these expressions in detail, but the key physical fact that we have obtained is that at second order, in systems where continuous decay channels are available from the state \ket{i}, the time evolution is now of the form \ket{i(t)} = U_{ii}(t) \ket{i} = \exp \left( -\frac{i}{\hbar} E_i t - \frac{\Gamma t}{2\hbar} \right) \ket{i}, so that now, p_{ii}(t) = |U_{ii}(t)|^2 = e^{-t / \tau}, with the lifetime set by the rates into other states, \frac{1}{\tau} = \sum_{n} W_{ni}. In other words, we have now effectively picked up an imaginary correction to the energy, which has led to a decay lifetime for the state \ket{i}. Ordinarily, we would think of an imaginary energy as something which violates unitarity; if we just consider the \ket{i} state alone, then it is a violation of unitarity, because our particle can “disappear” by transitioning into another state. If we keep track of the entire system, then of course unitarity is still satisfied and there are not really any imaginary energies present.

To interpret the imaginary correction physically, it’s useful to move from the time domain to the energy domain. Take t=0 as the moment the decay begins, so the survival amplitude is U_{ii}(t) = e^{-iE_i t/\hbar}\, e^{-\Gamma t/(2\hbar)}\, \Theta(t), and Fourier transform: \tilde{U}_{ii}(E) = \int_0^\infty dt\ e^{iEt/\hbar}\, U_{ii}(t) = \frac{i\hbar}{(E - E_i) + i\Gamma/2}. The modulus squared is a Lorentzian, |\tilde{U}_{ii}(E)|^2 = \frac{\hbar^2}{(E - E_i)^2 + \Gamma^2/4}, peaked at E = E_i with full width at half maximum equal to \Gamma. A perfectly stable state (\Gamma \rightarrow 0) would give a pure phase e^{-iE_i t/\hbar} in the time domain and thus a delta function \delta(E - E_i) in the energy domain; the decay broadens that delta function into a Lorentzian of width \Gamma. Since \Gamma = \hbar/\tau, this is another manifestation of energy-time uncertainty: the line width \Delta E satisfies \Delta E = \hbar / \tau \Rightarrow (\Delta E) \tau = \hbar.

The inverse lifetime \Gamma can be observed in practice, and represents a fundamental limit on the precision with which we can measure the energy E of an unstable state, known as the natural width. There are other effects that contribute to observed line widths in the laboratory, related to the fact that we don’t just see them in situations with adiabatic potentials, but more realistically with photons and radiation involved. But the story gets much more complicated, and we have now seen the basic phenomenon at work, so we’ll stop the discussion here.